
Optimizing performance on gigabit networks

It is easy to saturate a 100 Mbps network using an OpenVPN tunnel: the throughput of the tunnel will be very close to that of a regular network interface. On gigabit networks and faster this is not so easy to achieve. This page explains how to increase the throughput of a VPN tunnel to near line speed on a 1 Gbps network. Some initial investigations using a 10 Gbps network are also explained.

Network setup

For this setup several machines were used, all connected to gigabit switches:

- two servers running CentOS 5.5 64bit, with an Intel E5440 CPU running @ 2.83GHz; the L2 cache size is 6 MB.

- a server running CentOS 5.5 64bit, with an Intel X5660 CPU running @ 2.80GHz; the L2 cache size is 12 MB. This CPU has support for the AES-NI instructions.

- a laptop running Fedora 14 64bit, with an Intel i5-560M CPU running @ 2.66GHz; the L2 cache size is 3 MB. This CPU also has support for the AES-NI instructions.

Before starting, the "raw" network speed was measured using 'iperf'. As expected, iperf reported consistent numbers around 940 Mbps, which is (almost) optimal for a gigabit LAN. The MTU size on all switches in the gigabit LAN was set to 1500.
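The figure of roughly 940 Mbps can be checked with a short calculation. The sketch below assumes standard Ethernet framing overhead (preamble, start-of-frame delimiter, header, FCS and inter-frame gap), IPv4 and TCP headers without extra options, and 12 bytes of TCP timestamp options, which is a common Linux default. The function name is hypothetical.

```python
# Per-frame Ethernet overhead on the wire, in bytes:
# preamble (7) + SFD (1) + header (14) + FCS (4) + inter-frame gap (12)
ETH_OVERHEAD = 7 + 1 + 14 + 4 + 12


def tcp_goodput_mbps(link_mbps, mtu, ip_header=20, tcp_header=20, tcp_options=12):
    """Best-case TCP payload rate for full-sized frames on an Ethernet link."""
    payload = mtu - ip_header - tcp_header - tcp_options
    wire_bytes = mtu + ETH_OVERHEAD
    return link_mbps * payload / wire_bytes


print(round(tcp_goodput_mbps(1000, 1500), 1))                 # 941.5
print(round(tcp_goodput_mbps(1000, 1500, tcp_options=0), 1))  # 949.3
```

Under these assumptions a gigabit link with an MTU of 1500 cannot deliver much more than about 941 Mbps of TCP payload, which matches the iperf measurement.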

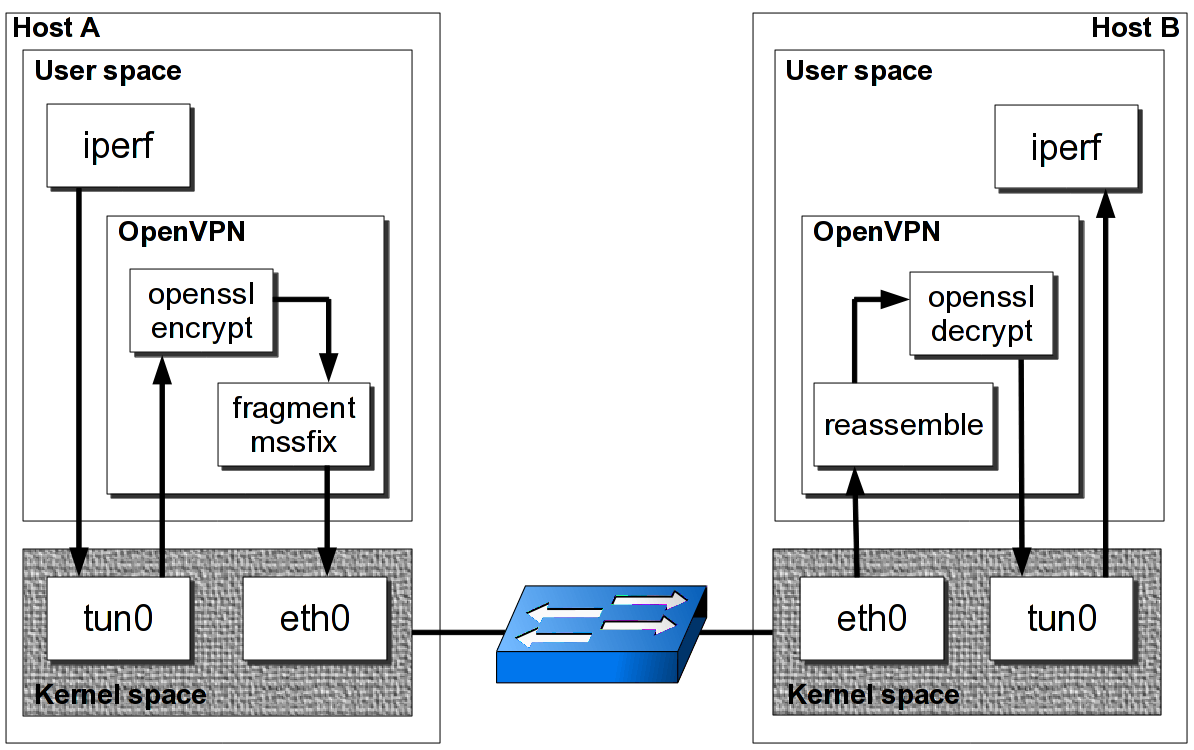

Understanding the flow of packets

It is important to understand how packets flow from the 'iperf' client via the OpenVPN tunnel to the 'iperf' server. The following diagram helps to clarify the flow:

when an 'iperf' packet is sent to the VPN server IP address, the packet enters the kernel's 'tun0' device. The packet is then forwarded to the userspace OpenVPN process, where the headers are stripped. The packet is encrypted and signed using OpenSSL calls. The encryption and signing algorithms can be configured using the '--cipher' and '--auth' options.

The resulting packet is then fragmented into pieces according to the '--fragment' and '--mssfix' options. Afterwards, the encrypted packet is sent out over the regular network to the OpenVPN server. On the server, the process is reversed: first the packet is reassembled, then it is decrypted, and finally it is sent out of the 'tun0' interface.
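The encrypt-and-sign step and its reversal on the server can be illustrated with a toy sketch. Everything in it is an assumption made for illustration only: the XOR transform is a placeholder and not Blowfish or AES, HMAC-SHA1 merely stands in for whatever '--auth' digest is configured, the function names are hypothetical, and the byte layout is not OpenVPN's real wire format.

```python
import hashlib
import hmac

TAG_LEN = hashlib.sha1().digest_size  # 20 bytes for SHA-1


def toy_cipher(key: bytes, data: bytes) -> bytes:
    """Placeholder XOR transform, NOT a real cipher; applying it twice restores the data."""
    return bytes(b ^ key[i % len(key)] for i, b in enumerate(data))


def seal_packet(packet: bytes, cipher_key: bytes, auth_key: bytes) -> bytes:
    """Client side: encrypt the tun packet, then sign the ciphertext."""
    ciphertext = toy_cipher(cipher_key, packet)
    tag = hmac.new(auth_key, ciphertext, hashlib.sha1).digest()
    return tag + ciphertext


def open_packet(datagram: bytes, cipher_key: bytes, auth_key: bytes) -> bytes:
    """Server side: verify the signature first, then decrypt."""
    tag, ciphertext = datagram[:TAG_LEN], datagram[TAG_LEN:]
    expected = hmac.new(auth_key, ciphertext, hashlib.sha1).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("authentication failed")
    return toy_cipher(cipher_key, ciphertext)


datagram = seal_packet(b"iperf payload", b"cipher-key", b"auth-key")
print(open_packet(datagram, b"cipher-key", b"auth-key"))
```

The point of the sketch is that both the cipher and the digest run once per packet in userspace, so their per-packet cost matters for throughput.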

Standard setup

The default OpenVPN version for CentOS 5 is currently 2.1.4; the system OpenSSL version is 0.9.8e.

With a very plain shared secret key setup, the server (listener) is started with

openvpn --dev tun --proto udp --port 11000 --secret secret.key --ifconfig 192.168.222.11 192.168.222.10

and the client with

openvpn --dev tun --proto udp --port 11000 --secret secret.key --ifconfig 192.168.222.10 192.168.222.11 --remote server

With this setup an iperf result of 156 Mbps is obtained.

By switching to the cipher aes-256-cbc the performance drops even further to 126 Mbps. These results were obtained on the two E5440 based servers.

Tweaked setup

The first tweaks made were:

- increase the MTU size of the tun adapter ('--tun-mtu') to 6000 bytes. This resembles jumbo frames on a regular Ethernet LAN. Note that the MTU size on the underlying network switches was not altered.

- disable OpenVPN's internal fragmentation algorithm using '--fragment 0'.

- disable OpenVPN's 'TCP Maximum Segment Size' limiter using '--mssfix 0'.

The server is now started with

openvpn --dev tun --proto udp --port 11000 --secret secret.key --ifconfig 192.168.222.11 192.168.222.10 --tun-mtu 6000 --fragment 0 --mssfix 0

and the client with

openvpn --dev tun --proto udp --port 11000 --secret secret.key --ifconfig 192.168.222.10 192.168.222.11 --remote server --tun-mtu 6000 --fragment 0 --mssfix 0

Now an iperf result of 307 Mbps is obtained.

By varying the '--tun-mtu' size we obtain the following results (all speeds in Mbps):

| MTU (bytes) | Blowfish (Mbps) | AES256 (Mbps) |
|---|---|---|
| 1500 | 158 | 126 |
| 6000 | 307 | 220 |
| 9000 | 370 | 249 |
| 12000 | 416 | 252 |
| 24000 | 466 | 259 |
| 36000 | 470 | 244 |
| 48000 | 510 | 247 |
| 60000 | 488 | 221 |

For the default Blowfish cipher the optimal value of the '--tun-mtu' parameter for a link between these two servers seems to be 48000 bytes. For AES256 the throughput levels off earlier and peaks at 24000 bytes.
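The measurements from the table can be summarised with a few lines of Python. The numbers are copied from the table; the dictionary layout and the helper name `best_mtu` are only illustrative choices.

```python
# tun-mtu (bytes) -> (Blowfish Mbps, AES256 Mbps), copied from the table
results = {
    1500: (158, 126),
    6000: (307, 220),
    9000: (370, 249),
    12000: (416, 252),
    24000: (466, 259),
    36000: (470, 244),
    48000: (510, 247),
    60000: (488, 221),
}


def best_mtu(column):
    """Return the tun-mtu with the highest throughput in the given column."""
    return max(results, key=lambda mtu: results[mtu][column])


for name, column in (("Blowfish", 0), ("AES256", 1)):
    mtu = best_mtu(column)
    speedup = results[mtu][column] / results[1500][column]
    print(f"{name}: best MTU {mtu}, {results[mtu][column]} Mbps, {speedup:.2f}x over MTU 1500")
# Blowfish: best MTU 48000, 510 Mbps, 3.23x over MTU 1500
# AES256: best MTU 24000, 259 Mbps, 2.06x over MTU 1500
```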

Increasing the MTU size of the tun adapter and disabling OpenVPN's internal fragmentation routines increases the throughput quite dramatically. The first reason is that the OpenSSL encryption and decryption routines perform better when they are fed larger packets. The second is that fragmentation is no longer done by OpenVPN itself but is left to the operating system and to the kernel network device drivers. For a LAN-based setup this can work, but when handling various types of remote users (road warriors, cable modem users, etc.) this is not always a possibility.
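To get a feel for what leaving fragmentation to the operating system means when the link MTU is still 1500, the number of IP fragments per tunnel packet can be estimated. This is a rough sketch that assumes IPv4 without options, a full-sized tun packet, and a per-packet OpenVPN overhead of 60 bytes. That overhead is a placeholder chosen for illustration; the real value depends on the cipher, digest and other options. The function name is hypothetical.

```python
IP_HEADER = 20   # IPv4 header without options
UDP_HEADER = 8


def ip_fragments(tun_mtu, openvpn_overhead=60, link_mtu=1500):
    """Estimate how many IP fragments one full-sized tunnel packet becomes."""
    udp_datagram = tun_mtu + openvpn_overhead + UDP_HEADER
    # Every fragment except the last must carry a multiple of 8 bytes.
    per_fragment = (link_mtu - IP_HEADER) // 8 * 8
    return (udp_datagram + per_fragment - 1) // per_fragment


for mtu in (1500, 6000, 9000, 48000):
    print(mtu, ip_fragments(mtu))
# 1500 2
# 6000 5
# 9000 7
# 48000 33
```

Losing any single fragment loses the whole tunnel packet, which is one reason why very large '--tun-mtu' values suit a clean LAN better than a lossy path to remote users.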
